Machine learning has become one of the most influential technologies shaping the modern digital world. From recommendation systems and image recognition to language models and predictive analytics, machine learning applications rely heavily on powerful computing infrastructure. At the heart of this infrastructure are server GPUs, specialized processors that enable machines to learn from data efficiently and at scale. As machine learning models grow more complex and datasets become larger, server GPUs have emerged as essential components for accelerating artificial intelligence development.

A GPU, or Graphics Processing Unit, was originally designed to render images and graphics for gaming and visual applications. However, researchers soon discovered that GPUs excel at performing parallel computations, making them ideal for machine learning workloads. A CPU devotes a small number of powerful cores to running individual tasks as quickly as possible, while a GPU spreads work across thousands of lightweight threads that execute at the same time. This capability allows machine learning algorithms to process massive amounts of data much faster than CPU-only systems.

Machine learning relies heavily on mathematical operations such as matrix multiplications and vector calculations. Neural networks, which form the foundation of modern AI systems, require repeated calculations across millions or billions of parameters during training. Server GPUs are optimized to handle these operations efficiently. Their architecture includes hundreds or thousands of smaller cores that work together to perform parallel processing, dramatically reducing the time required to train models.
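To make the parallelism concrete, here is a minimal pure-Python sketch of matrix multiplication. It is illustrative only and runs on the CPU. The function names `matmul` and `matmul_flops` are chosen for this example and do not come from any GPU library. The point is that every output cell is an independent dot product, which is the kind of work a GPU can hand out to many cores at once.

```python
def matmul(a, b):
    """Multiply two matrices given as lists of rows."""
    rows, inner, cols = len(a), len(b), len(b[0])
    if any(len(row) != inner for row in a):
        raise ValueError("inner dimensions do not match")
    # Each output cell (i, j) depends only on row i of a and column j of b,
    # so all cells can be computed independently, and therefore in parallel.
    return [
        [sum(a[i][k] * b[k][j] for k in range(inner)) for j in range(cols)]
        for i in range(rows)
    ]


def matmul_flops(rows, inner, cols):
    """Approximate operation count: one multiply and one add per term."""
    return 2 * rows * inner * cols


a = [[1.0, 2.0], [3.0, 4.0]]
b = [[5.0, 6.0], [7.0, 8.0]]
product = matmul(a, b)
print(product)                          # [[19.0, 22.0], [43.0, 50.0]]
print(matmul_flops(4096, 4096, 4096))   # 137438953472
```

A single 4096 by 4096 multiplication already involves roughly 137 billion operations, and training repeats products like this many times, which is why hardware that runs them in parallel matters so much.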

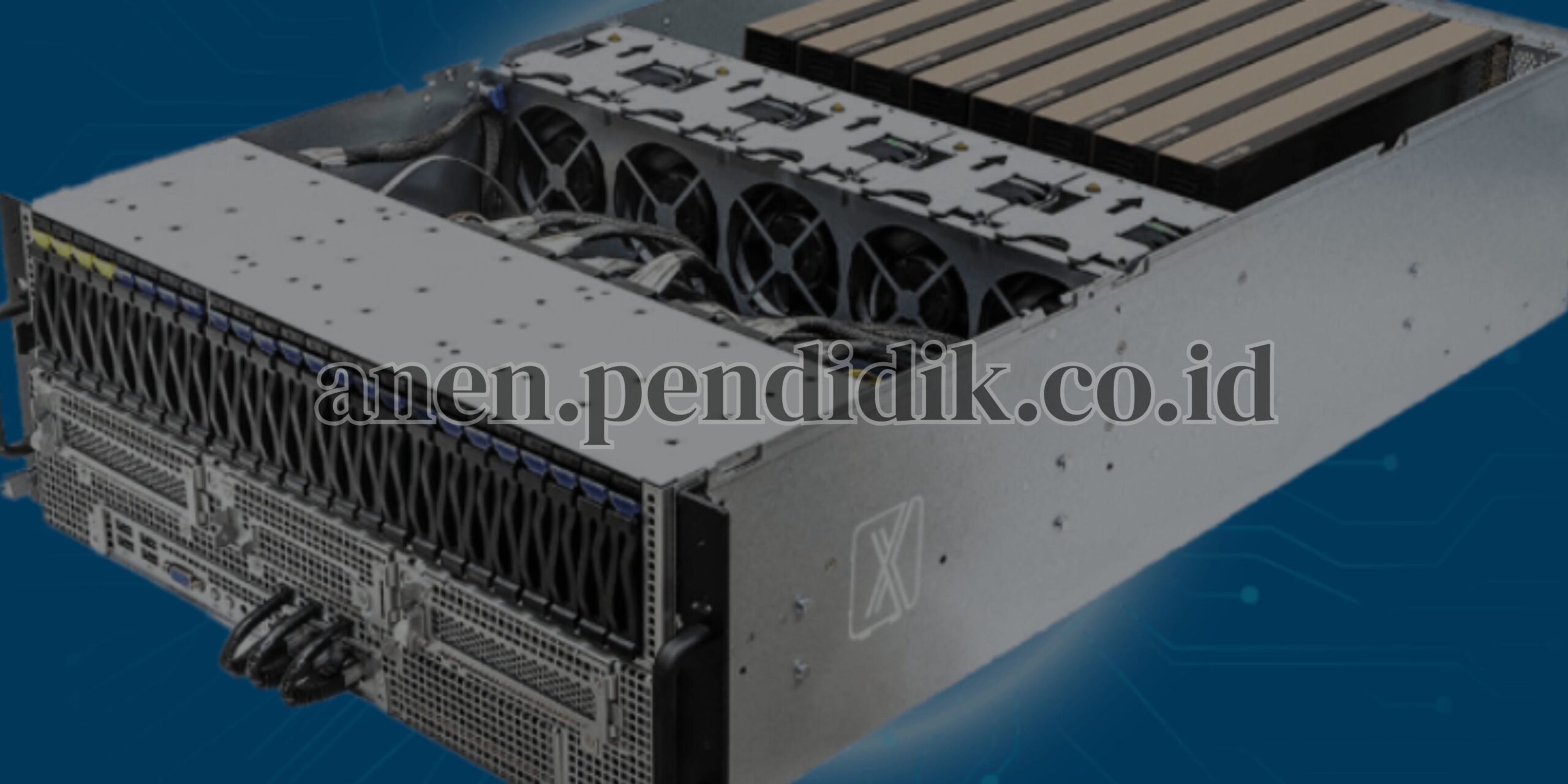

Server GPUs differ from consumer-grade GPUs in several important ways. While gaming GPUs focus primarily on graphics performance, server GPUs are designed for reliability, scalability, and continuous operation in data center environments. They are built to fit data center cooling (many are passively cooled and rely on the server chassis airflow), typically offer error-correcting (ECC) memory, and ship with drivers tuned for scientific and enterprise workloads. These features help server GPUs run intensive machine learning tasks for extended periods with less risk of throttling, silent data errors, or system instability.

One of the most important advantages of server GPUs is accelerated training. Training a machine learning model involves feeding large datasets into algorithms and adjusting model parameters through iterative optimization. Without GPU acceleration, training complex models could take weeks or months using CPU-based systems. Server GPUs reduce training time significantly, enabling faster experimentation and innovation. Researchers and developers can test multiple models, refine algorithms, and deploy solutions more quickly.
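The iterative optimization described above can be sketched with plain gradient descent on a tiny linear model. This is a toy CPU example, not a GPU workload. The function name `train_linear`, the learning rate, the step count, and the data are all assumptions made for illustration. Real training runs the same kind of loop over far larger models and datasets, which is where GPU acceleration pays off.

```python
def train_linear(xs, ys, learning_rate=0.05, steps=2000):
    """Fit y = w * x + b by gradient descent on mean squared error."""
    w, b = 0.0, 0.0
    n = len(xs)
    for _ in range(steps):
        # Forward pass: prediction error for every training example.
        errors = [w * x + b - y for x, y in zip(xs, ys)]
        # Backward pass: gradients of the mean squared error.
        grad_w = 2.0 * sum(e * x for e, x in zip(errors, xs)) / n
        grad_b = 2.0 * sum(errors) / n
        # Update step: move the parameters against the gradient.
        w -= learning_rate * grad_w
        b -= learning_rate * grad_b
    return w, b


xs = [0.0, 1.0, 2.0, 3.0]
ys = [1.0, 3.0, 5.0, 7.0]  # generated from y = 2x + 1
w, b = train_linear(xs, ys)
print(round(w, 3), round(b, 3))
```

Each pass through the loop is one training step. Shortening the time per step is what lets researchers try more models in the same amount of time.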

Memory architecture plays a crucial role in GPU performance for machine learning. Server GPUs typically include high-bandwidth memory (HBM) that moves data rapidly between memory and the processing cores, which helps keep the cores from stalling while they wait for data. Large memory capacity is particularly important for training deep learning models, because the GPU must hold the model parameters, their gradients, optimizer state, intermediate activations, and the current batch of data at the same time. Insufficient memory can slow down training or limit model size and batch size, making advanced GPU memory systems essential.
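A rough back-of-the-envelope estimate shows why capacity matters. The sketch below assumes about 16 bytes per parameter for training, a common rule of thumb for mixed-precision training with the Adam optimizer (16-bit weights and gradients plus 32-bit master weights and two optimizer moments), and 2 bytes per parameter for 16-bit inference. Both figures are assumptions, and activation memory is deliberately left out because it depends on batch size and architecture. The function names are hypothetical.

```python
GIB = 2 ** 30  # bytes in one gibibyte


def training_memory_gib(num_parameters, bytes_per_parameter=16):
    """Estimate memory for weights, gradients, and optimizer state.

    The 16 bytes per parameter default is an assumption (mixed precision
    with Adam); activations are not included.
    """
    return num_parameters * bytes_per_parameter / GIB


def inference_memory_gib(num_parameters, bytes_per_weight=2):
    """Estimate memory for the weights alone, assuming 16-bit storage."""
    return num_parameters * bytes_per_weight / GIB


params = 7_000_000_000  # a hypothetical 7-billion-parameter model
print(f"training:  {training_memory_gib(params):.1f} GiB")
print(f"inference: {inference_memory_gib(params):.1f} GiB")
```

Under these assumptions, training the hypothetical 7-billion-parameter model needs over 100 GiB before activations are counted, which is more than a single GPU typically provides, while serving it fits in a small fraction of that.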

Another defining feature of server GPUs is support for distributed computing. Modern machine learning workloads often require multiple GPUs working together across a cluster of servers. High-speed interconnect technologies allow GPUs to share data and synchronize training processes efficiently. Distributed training enables organizations to handle extremely large models that cannot fit within a single GPU’s memory. By dividing workloads across multiple GPUs, machine learning systems achieve faster performance and improved scalability.
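One common way to divide the work is data parallelism: each GPU computes gradients on its own shard of the data, and the gradients are then averaged across GPUs (an all-reduce) so that every copy of the model applies the same update. The sketch below simulates this on the CPU with two "workers". The names `all_reduce_mean` and `local_gradient` are hypothetical, and the sketch does not cover splitting a model that is too large for one GPU, which requires model parallelism instead.

```python
def all_reduce_mean(worker_gradients):
    """Average gradient vectors element-wise across all workers."""
    num_workers = len(worker_gradients)
    return [sum(values) / num_workers for values in zip(*worker_gradients)]


def local_gradient(w, shard):
    """Mean squared error gradient for the model y = w * x on one shard."""
    return [2.0 * sum((w * x - y) * x for x, y in shard) / len(shard)]


data = [(1.0, 2.0), (2.0, 4.0), (3.0, 6.0), (4.0, 8.0)]  # y = 2x
shards = [data[:2], data[2:]]  # one equal-sized shard per simulated GPU

w = 0.0
for _ in range(200):
    # Each worker computes a gradient on its own shard (in parallel on
    # real hardware; sequentially in this simulation).
    grads = [local_gradient(w, shard) for shard in shards]
    # Synchronize: every worker ends up with the same averaged gradient.
    w -= 0.01 * all_reduce_mean(grads)[0]

print(round(w, 6))
```

Because the shards are the same size, the averaged gradient equals the gradient over the full dataset, so the result matches single-device training while each worker only touches half of the data.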

Energy efficiency has become a major consideration in GPU server design. Machine learning workloads consume significant power, especially when training large neural networks. Server GPU manufacturers have introduced energy-efficient architectures that deliver higher performance per watt. Advanced power management systems dynamically adjust performance levels based on workload demand, reducing unnecessary energy consumption while maintaining high computational output.

Software ecosystems also contribute to the effectiveness of server GPUs in machine learning. Specialized libraries and frameworks are designed to take advantage of GPU acceleration automatically. Developers can write machine learning code without manually optimizing every operation for parallel execution. These frameworks distribute computations across GPU cores, manage memory usage, and optimize performance behind the scenes. This accessibility has played a major role in expanding machine learning adoption beyond research institutions into mainstream business environments.

Server GPUs are widely used across industries. In healthcare, GPUs enable medical image analysis and disease prediction models. Financial institutions rely on GPU-powered machine learning systems for fraud detection and risk analysis. Retail companies use GPUs to analyze customer behavior and deliver personalized recommendations. Autonomous vehicle development depends heavily on GPU servers to train computer vision models capable of understanding real-world environments. Even creative industries use GPU-based machine learning to generate images, videos, and digital content.

Inference acceleration is another important role of server GPUs. After a machine learning model has been trained, it must process new data quickly to generate predictions. This stage, known as inference, often requires real-time performance. Server GPUs can serve many inference requests concurrently, typically by grouping them into batches that are processed in a single pass, making them well suited to applications such as online search engines, chatbots, and recommendation platforms. Optimized inference engines further improve throughput while reducing latency.
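A simple way to picture high-volume inference is request batching: incoming requests are grouped so that each pass through the model handles several at once. The sketch below is a simplified illustration. `run_model` is a hypothetical stand-in for a real GPU forward pass, and `make_batches` and `serve` are names invented for this example. Production inference servers add queuing, timeouts, and dynamic batch sizing on top of this idea.

```python
def make_batches(requests, max_batch_size):
    """Split a list of requests into batches of at most max_batch_size."""
    if max_batch_size < 1:
        raise ValueError("max_batch_size must be at least 1")
    return [
        requests[start:start + max_batch_size]
        for start in range(0, len(requests), max_batch_size)
    ]


def run_model(batch):
    """Hypothetical stand-in for one forward pass over a whole batch."""
    return [len(text) for text in batch]


def serve(requests, max_batch_size=4):
    """Process all requests batch by batch, preserving request order."""
    results = []
    for batch in make_batches(requests, max_batch_size):
        results.extend(run_model(batch))
    return results


queries = ["hello", "gpu", "inference", "batching", "latency"]
print(make_batches(queries, 2))
print(serve(queries, max_batch_size=2))
```

Larger batches generally raise throughput, but each request may wait longer for its batch to fill, so the batch size is a trade-off between throughput and latency.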

Cloud computing has expanded access to server GPUs significantly. Organizations no longer need to purchase expensive hardware to use GPU acceleration. Cloud providers offer GPU-enabled server instances that allow developers to rent high-performance computing resources on demand. This pay-as-you-go model has democratized machine learning by enabling startups and researchers to access advanced infrastructure without large upfront investments. Hybrid environments combining on-premise GPU servers and cloud resources are becoming increasingly common.

Cooling and thermal management are essential for maintaining GPU performance. High-performance GPUs generate substantial heat during operation. Data centers use advanced cooling techniques, including liquid cooling and optimized airflow systems, to maintain stable temperatures. Effective cooling ensures consistent performance and prolongs hardware lifespan, which is critical for enterprise environments running continuous workloads.

Security considerations are also important in GPU-based machine learning environments. Sensitive datasets used for training models must be protected from unauthorized access. Some recent server GPUs support confidential computing features, such as encrypted data transfers and hardware-isolated execution environments, that help safeguard data during processing. GPU virtualization and partitioning technologies allow multiple users to share GPU resources with isolation between workloads, reducing the risk of compromising data privacy.

The rapid growth of generative AI has further increased demand for server GPUs. Applications such as large language models, image generation systems, and voice synthesis require enormous computational power during both training and inference. GPU servers provide the performance needed to support these advanced workloads. As AI models continue to expand in size and complexity, GPU technology continues to evolve to meet new computational challenges.

Scalability remains one of the strongest advantages of GPU-based server infrastructure. Organizations can start with a small number of GPUs and expand their systems as machine learning needs grow. Modular server designs allow additional GPUs to be integrated seamlessly into existing infrastructure. This flexibility ensures long-term adaptability as AI technologies continue to advance.

Looking ahead, the future of server GPUs for machine learning includes innovations such as specialized AI accelerators, improved memory architectures, and faster interconnect technologies. Manufacturers are developing chips specifically optimized for AI workloads rather than general graphics processing. These advancements aim to deliver higher efficiency, lower energy consumption, and faster performance for increasingly sophisticated machine learning models.

In conclusion, server GPUs have become the driving force behind modern machine learning innovation. Their parallel processing capabilities, high-speed memory systems, distributed computing support, and energy-efficient designs enable organizations to train and deploy AI models at unprecedented scale. As machine learning continues to transform industries and redefine technological possibilities, server GPUs will remain essential tools powering the next generation of intelligent applications and data-driven solutions.